More than two months since my last poast.

I’m not dead (sorry FEDs & those who had hoped otherwise). My absence has been due to a few things:

I’ve been busy with the team building Spirit of Satoshi, which I’ll talk a little bit more about below.

I was travelling with Wifu, attending a couple of conferences, BTCPrague and Oslo FF being the two main ones. Damn, there is so much to love about Europe. It is and always will be the cradle of civilisation.

I’ve been enjoying meatspace, and staying offline. This has honestly felt so good. Alas, this necessary evil has dragged me back to say my 2c. I signed a deal with the devil when I chose to build a technology company and write online. Maybe in my next life, I’ll stick with being a carpenter like Jesus. He didn’t need to waste his time with Twitter nonsense. Or is it called “X” now? Jeez... smh

So what’s new???

A couple things. Let’s start with Spirit of Satoshi….

Building a Language Model

Our mission to build a more “based” language model continues. It’s proving to be more involved than even I had thought, not from a “technically complicated” standpoint, but more from a “damn, this is tedious” standpoint.

It’s all about Data. And not the quantum of data, but the quality and format of data. You’ve probably heard nerds talk about this, but you don’t really appreciate it until you actually begin feeding the stuff to a model and get a result... which wasn’t necessarily what you wanted.

The data pipeline is where all the work is. You have to collect and curate the data, then you have to extract it. Then you have to programmatically clean it (it’s impossible to do a first run clean manually).
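To make the “programmatic clean” a bit more concrete, here’s a minimal Python sketch of a first pass over one extracted document. The rules in it (Unicode normalisation, stripping page-number lines and URLs, dropping short fragments and exact duplicates) and the `clean_document` name are my own assumptions for illustration, not our actual pipeline. Every corpus needs its own rules.

```python
import re
import unicodedata

# Hypothetical first-pass rules; a real corpus needs its own tuning.
PAGE_NUMBER = re.compile(r"^\s*(page\s+)?\d+\s*$", re.IGNORECASE)
URL = re.compile(r"https?://\S+")


def clean_document(raw: str, min_chars: int = 40) -> list[str]:
    """Return cleaned, de-duplicated paragraphs from one extracted document."""
    # Normalise Unicode compatibility characters (e.g. ligatures from PDFs).
    text = unicodedata.normalize("NFKC", raw)
    # Drop lines that are nothing but a page number (common PDF debris).
    lines = [ln for ln in text.splitlines() if not PAGE_NUMBER.match(ln)]
    paragraphs = "\n".join(lines).split("\n\n")

    seen: set[str] = set()
    cleaned: list[str] = []
    for para in paragraphs:
        para = URL.sub("", para)                  # strip bare URLs
        para = re.sub(r"\s+", " ", para).strip()  # collapse whitespace
        if len(para) < min_chars:
            continue  # too short to be useful training text
        key = para.lower()
        if key in seen:
            continue  # exact (case-insensitive) duplicate
        seen.add(key)
        cleaned.append(para)
    return cleaned
```

Anything a pass like this misses (and it will miss plenty) is what the later, human-driven clean is for.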

Then you take this programmatically cleaned raw data and you have to transform it into multiple data formats (think of Q&A pairs, or semantically coherent chunks / paragraphs). This you also need to do programmatically, if you’re dealing with loads of data - which is the case for a Language Model. Funny enough, other language models are actually good for this task! You use language models to build new language models.
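Here’s a rough sketch of the chunking step, assuming a paragraph is the unit you don’t want to split mid-thought. `chunk_paragraphs`, the 200-word budget and the `qa_prompt` wording are all hypothetical. The prompt would be sent to whatever other language model you’re using to generate Q&A pairs, and that call isn’t shown here.

```python
def chunk_paragraphs(paragraphs: list[str], max_words: int = 200) -> list[str]:
    """Greedily pack whole paragraphs into chunks of at most max_words words.

    A paragraph longer than max_words becomes its own (oversized) chunk
    rather than being cut in half.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in paragraphs:
        n = len(para.split())
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))  # budget exceeded: close chunk
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def qa_prompt(chunk: str, n_pairs: int = 3) -> str:
    """Build a (hypothetical) prompt asking another LLM for Q&A pairs."""
    return (
        f"Read the passage below and write {n_pairs} question-and-answer pairs "
        "that can be answered from the passage alone. "
        'Return JSON: [{"question": ..., "answer": ...}].\n\n'
        f"PASSAGE:\n{chunk}"
    )
```

Whatever comes back from that model is exactly the “irrelevant garbage” risk mentioned next, which is why the output still has to go through another clean.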

THEN, because there will likely be loads of junk left in there, and irrelevant garbage generated by whatever language model you used to programmatically transform the data, you need to do a more intense clean.

THIS is where you need to get human help, because at this stage, it seems humans are still the only creatures on the planet with the agency necessary to differentiate and determine quality. Algorithms can kind of do this, but not so well with language just yet - especially in more nuanced, comparative contexts.

In any case, doing this at scale is incredibly hard unless you have an army of people to help you. That army of people can be mercenaries paid for by someone like OpenAI who has more money than God, or they can be missionaries, which is what the Bitcoin community in our case generally is (we’re very lucky and grateful for this). Individuals go through data items and one by one select whether to keep, discard or modify the data.

Once the data goes through this process, you end up with something clean on the other end. Of course, there are more intricacies involved here. For example, you need to ensure that bad actors who are trying to botch your clean-up process are weeded out, or their inputs are discarded. You can do that in a number of ways, and everyone does it a bit differently. You can screen people on the way in, or you can build some sort of internal clean-up consensus model so that thresholds need to be met for data items to be kept or discarded, etc. We’re doing a blend of both, and I guess we shall see how effective it is in the coming months.
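Here’s a minimal sketch of what a threshold-based consensus rule could look like. The vote labels, the three-vote minimum and the two-thirds threshold are illustrative assumptions, not the parameters we’re actually using. Screened-out reviewers are ignored, each reviewer counts once, and an item only gets a verdict when one label clears the threshold.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Vote:
    reviewer: str
    label: str  # hypothetical labels: "keep", "discard" or "modify"


def consensus(votes: Iterable[Vote], blocked: Iterable[str] = (),
              min_votes: int = 3, threshold: float = 2 / 3) -> str:
    """Return the winning label, "pending" (too few votes) or "needs_review"."""
    blocked_set = set(blocked)
    first_vote: dict[str, str] = {}
    for vote in votes:
        if vote.reviewer in blocked_set:
            continue  # screened-out reviewer: ignore their input entirely
        # One vote per reviewer, so nobody can stuff the ballot.
        first_vote.setdefault(vote.reviewer, vote.label)
    if len(first_vote) < min_votes:
        return "pending"
    label, count = Counter(first_vote.values()).most_common(1)[0]
    if count / len(first_vote) >= threshold:
        return label
    return "needs_review"  # no label cleared the threshold
```

Raising `min_votes` or `threshold` makes it harder for a handful of bad actors to push junk through, at the cost of more items sitting in “pending”.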

Now…once you’ve got this beautiful clean data out the end of this “pipeline”, you then need to format it once more in preparation for “training” a model.
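What that final format looks like depends on the training framework you use. One common convention is JSON Lines with chat-style `messages` records. The sketch below assumes that format and a hypothetical system prompt, so treat it as one example shape rather than the only one.

```python
import json

# Hypothetical system prompt, for illustration only.
SYSTEM_PROMPT = "You are a helpful assistant that answers questions about Bitcoin."


def to_jsonl(pairs: list[dict[str, str]], system_prompt: str = SYSTEM_PROMPT) -> str:
    """Turn cleaned Q&A pairs into chat-style JSON Lines, one record per pair."""
    lines = []
    for pair in pairs:
        question = pair.get("question", "").strip()
        answer = pair.get("answer", "").strip()
        if not question or not answer:
            continue  # skip incomplete pairs rather than train on them
        record = {"messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]}
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines) + ("\n" if lines else "")
```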

This final stage is where the GPUs come into play, and is really what most people think about when they hear about building language models. All the stuff I’ve covered up until now is generally ignored.

This home stretch involves training a series of models, and playing with the parameters, the data blends, the quantum of data, the model types, etc. This can quickly get expensive, so you best have some damn good data and you’re better off starting with smaller models and building your way up.

It’s all experimental, and what you get out the other end is… a result…

It’s incredible the things we humans conjure up. Anyway..

Our result is still in the making, and if you would like to be involved, we have a couple ways you can do it.

You can help us collect and curate the most relevant data for the model. We’re doing it here at the Nakamoto Repository. This is a repository of every book, essay, article, blog, YouTube video and podcast about and related to Bitcoin, and peripherals like Nietzsche, Spengler, Peterson, Hoppe, Rothbard, Jung, the Bible, etc.

You can search for anything and access the URL, text or PDF. If you can’t find something, or feel it needs to be included, you can “Add” a record. That’s where we’ll need your help. If you add junk though, it won’t be accepted, so don’t be an asshole and waste both our time.

If you can submit the data as a .txt file, along with the link, it would be ideal.

You can actually help us clean the data, and earn Sats!! Remember that missionary stage I mentioned? Well, this is it. We’re rolling out a whole toolbox as part of this, and you’ll be able to play “FUD Buster” and “Rank replies” and all sorts of other things. For now, it’s a Tinder-esque Keep/Discard/Comment interface for cleaning up what’s in the pipeline.

This is a way for people who’ve spent years learning about and understanding Bitcoin to transform this “work” into “Sats.” No, you’re not going to get rich, but you can (a) help contribute toward something you might deem a worthy project, and (b) earn something along the way.

If you’re interested in that, you can join here: Help Train Satoshi

So there you have it. An update and overview all rolled into one. Hope you learned something, and if you ever wanted to actually be involved in the building of such a program / machine / product, please join us :)

What else is new?

My thinking…

Well - this is not so much “new” as it is an evolution and confirmation of my earlier thinking. In my first few essays I argued that artificial intelligence is a flawed term, because while it is artificial, it’s not intelligent - and furthermore, the fear porn surrounding AGI was completely unfounded, because there is literally no risk of this thing becoming spontaneously sentient and killing us all. A few months on and I am even more convinced of this.

I think back to the excellent article “I’m bored of Gen Ai”, and how spot on its author was. There’s really nothing magical, or intelligent for that matter, about any of this AI stuff. The more we play with it, the more time we spend actually building our own, the more we realise there’s no sentience here. There’s no actual thinking or reasoning happening. There is no agency. These are just “probability programs.”

The way they are labelled, and the terms thrown around, whether it’s ‘Ai’ or ‘machine learning’ or ‘Agents’ is actually where most of the fear, uncertainty and doubt lies.

These labels are just an attempt to describe a set of processes that are really unlike anything a human does. The problem with language is that we immediately begin to anthropomorphise it in order to make sense of it. And in the process of doing that, it is the audience or the listener that breathes life into the Frankenstein monster.

AI has NO life other than what you give it with your own imagination. This is much the same with any other imaginary eschatological threat

{insert climate change, aliens, or whatever else is going on on Twitter / X}.

This is of course very useful for globohomo bureaucrats that want to use any such tool/program/machine for their own purposes. They’ve been spinning stories and narratives since before they could walk, and this is just the latest one to spin. And because most people are lemmings and will believe whatever someone who sounds a few IQ points smarter than them has to say, they will use that to their advantage.

I remember talking about regulation coming down the pipeline. As of a week or two ago, there are now “official guidelines” or something of the sort for Generative Ai - courtesy of our bureaucratic overlords. What this means, nobody really knows. It’s masked in the same nonsensical language as all their other regulations. The net result, once again: “we write the rules, we get to use the tools the way we want, you must use them the way we tell you, or else.”

The most ridiculous part is that a bunch of people cheered about this, thinking that they’re somehow safer from the imaginary monster that never was. In fact, they’ll probably credit these agencies with “saving us from AGI” because it never materialised.

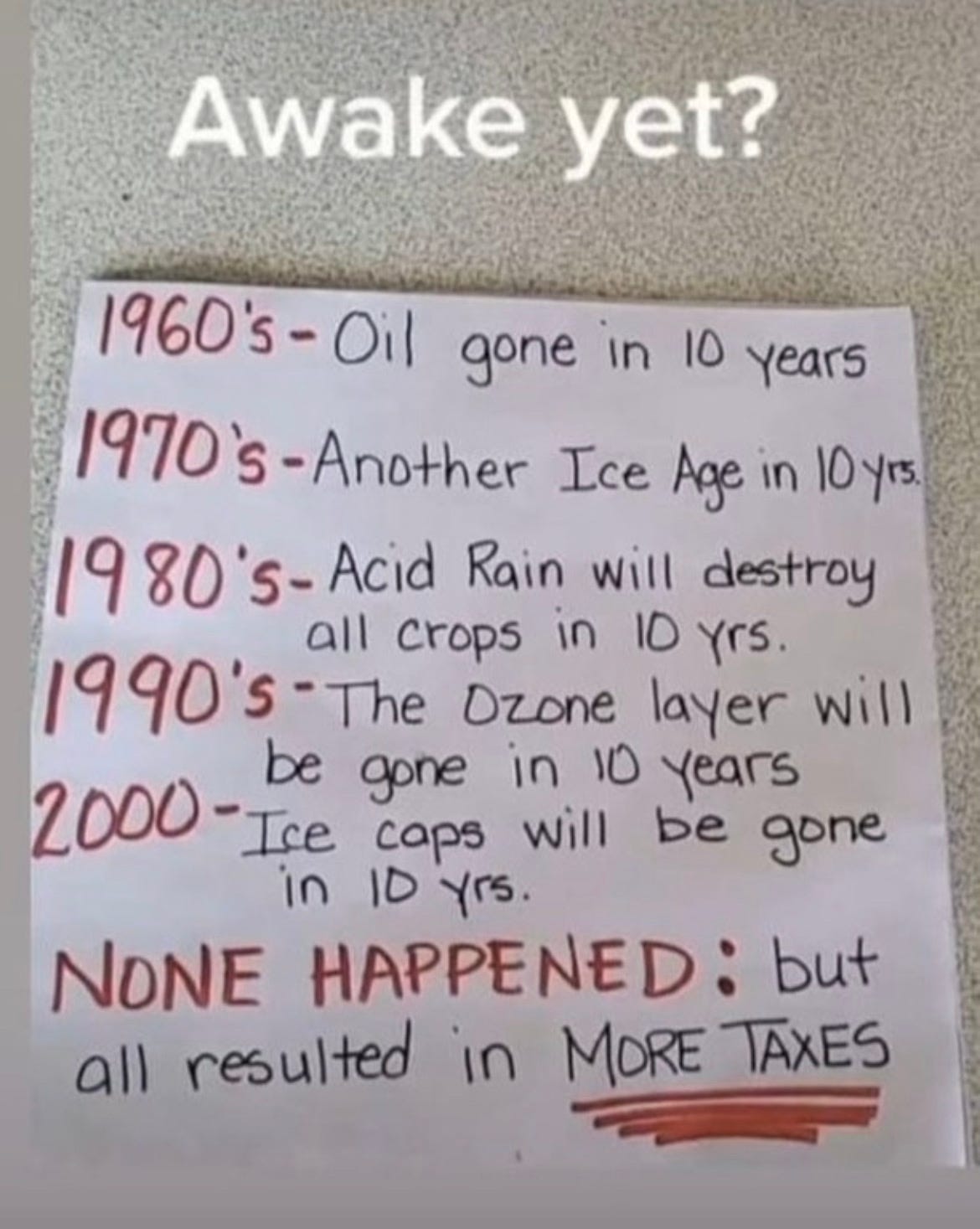

Reminds me of this:

When I posted that on Twitter, the number of idiots who genuinely believed that these catastrophes were avoided thanks to increased bureaucratic intervention told me all I needed to know about the level of collective intelligence on that platform.

Nevertheless. Here we are. Once again. Same story, new characters.

Alas - there’s really little we can do about that, other than to focus on our own stuff. We’ll continue to do what we set out to do.

I’ve become less excited about “Gen-AI” in general, and I get the sense that a lot of the hype is wearing off as people’s attention moves onto Aliens and Politics again. I’m also less convinced that there is something substantially transformative here - at least to the degree that I thought six months ago. Perhaps I’ll be proven wrong. I do think these tools have latent, untapped potential, but it’s just that - latent. I think we have to be more realistic about what they are (instead of artificial intelligence, it’s better to call them probability programs), and that might actually mean we spend less time and energy on pipe dreams and focus more on building useful applications. In that sense, I remain curious and cautiously optimistic that something does materialise, and hopeful that we can take part in that.

To that end also, I think I might scale back my writing on the philosophical side of this newsletter, because I don’t know if much more needs to be said. I had essays half written about Consciousness, Computational Theory of Mind and all sorts of other things, but I’m not sure any of that really matters or is pertinent. If you think otherwise and would like me to write about that, please comment below.

If not, I think I will do a monthly or bi-monthly post talking more about the work we’re doing, the practical discoveries we’re making and *IF AND WHEN* I get the inspiration or have made some sort of philosophical discovery, I can go on a rant about that.

For now, I shall leave you all to your day, and hope this was a useful 10 minutes of your weekend.
